Simple Web Scraper Notifications

Dan Willoughby・ Feb 17, 2020・3 min read

My brother-in-law recently completed training to become a pilot and was starting his search for a job. He had been waking up early every day in hopes of finding a job posting where he currently lived. He did this for weeks, except for one day. Turns out, that was the day the posting went up. Go figure.

I had made Pushback as a side project and thought it'd be a perfect way to help him out. Pushback is an easy way to send notifications to your iOS or Android phone. It can be used with any programming language, such as Python, Ruby, Java, or JavaScript. You can check out some of the docs. It just needs one HTTP request to get started.

I decided to keep it simple and scrape the job posting site with curl and grep. Sometimes this won't work because the website you're scraping renders its content with JavaScript, so the text never appears in the raw HTML that curl fetches. In this case, it was fine because the site was a simple HTML page.

One-liner

The first iteration of the web scraper was done all on one line. It fetched the website with curl and then piped the output to grep to search for 'DPA'. The final step was to send a notification. grep -q prints nothing and exits with status 0 only when it finds a match, so && runs the second curl command only if 'DPA' is on the page; otherwise the command just ends. Here is the complete command:

curl -s https://secure.atpflightschool.com/cfi-job/ | grep -q DPA && curl https://api.pushback.io/v1/send -u at_token: -d 'id=Channel_505' -d 'title=DPA found' -d 'body=Found a posting with DPA!'

Beefed up version

This command did the job pretty well, but I wanted to guard against some failures. I was specifically worried about my curl request getting blocked for requesting too often. I beefed up the one-liner to check exit codes. If the curl command failed to fetch the job posting website, it would exit with a non-zero code, and I had the script notify me in a different Pushback channel. Otherwise it would notify my brother-in-law about his job posting. Making these different channels was pretty simple with Pushback: I only had to change the id parameter to send to a different channel.
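The exit-code routing described above can be sketched as two small functions. This is only an illustration under stated assumptions: `notify`, `route_result`, and the `PUSHBACK_TOKEN` environment variable are hypothetical names, while the endpoint, the `id`/`title`/`body` fields, and the channel IDs are the ones used elsewhere in this post.

```shell
#!/bin/bash

# Hypothetical helper: send one Pushback notification to the given channel.
# PUSHBACK_TOKEN is an assumed environment variable holding the access token.
notify() {
  local channel="$1" title="$2" body="$3"
  curl -s https://api.pushback.io/v1/send -u "${PUSHBACK_TOKEN}:" \
    -d "id=${channel}" -d "title=${title}" -d "body=${body}"
}

# Hypothetical router: pick the channel from the fetch result.
# $1 = exit code of the fetch, $2 = fetched HTML, $3 = keyword to look for.
route_result() {
  local fetch_exit="$1" page="$2" keyword="$3"
  if [ "$fetch_exit" -ne 0 ]; then
    # The fetch failed: tell me, not my brother-in-law.
    notify 'Channel_505' 'Non zero exit code' "Exit: ${fetch_exit}"
  elif printf '%s\n' "$page" | grep -q -- "$keyword"; then
    # The keyword is on the page: send the job alert.
    notify 'Channel_827' "${keyword} found" "Found a posting with ${keyword}!"
  fi
}
```

Calling `route_result "$?" "$JOB" DPA` right after the fetch would send at most one notification, and nothing at all when the page loads fine but has no match.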

I also copied something the website itself did to prevent caching: sending a UUID with each request. I'm not sure if this matters with curl, but I threw it in anyway because it was pretty simple.
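The cache-busting idea can also be sketched without Ruby. The snippet below is only an illustration: `make_uuid` and `build_url` are hypothetical helper names, and it assumes either the `uuidgen` command or the Linux `/proc/sys/kernel/random/uuid` file is available. If neither is, it falls back to a timestamp-based token, which is not a real UUID but should be unique enough for a `reload` parameter.

```shell
#!/bin/bash

# Hypothetical helper: print a fresh unique token, preferring a real UUID.
make_uuid() {
  if command -v uuidgen >/dev/null 2>&1; then
    uuidgen
  elif [ -r /proc/sys/kernel/random/uuid ]; then
    cat /proc/sys/kernel/random/uuid
  else
    # Last resort: seconds since the epoch plus the shell's process ID.
    printf '%s-%s\n' "$(date +%s)" "$$"
  fi
}

# Hypothetical helper: append the token as the reload query parameter.
build_url() {
  printf 'https://secure.atpflightschool.com/cfi-job/default.lasso?reload=%s\n' "$1"
}
```

A fetch would then look like `curl -s "$(build_url "$(make_uuid)")"`, so every request gets a URL the site has not seen before.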

Here is the complete script:

#!/bin/bash

# simple-scrap.sh

CITY=DPA

# UUID is not required, but it's what the site was doing, so why not

UUID=$(ruby -e 'require "securerandom"; puts SecureRandom.uuid')

# -f makes curl exit non-zero on HTTP errors too (for example, if requests get blocked)

JOB=$(curl -sf "https://secure.atpflightschool.com/cfi-job/default.lasso?reload=$UUID")

EXIT=$?

if [ "$EXIT" -ne 0 ]; then

  # Notify if we failed to fetch the website, then stop: there is no page to search

  curl https://api.pushback.io/v1/send -u at_token: -d 'id=Channel_505' -d 'title=Non zero exit code' -d "body=Exit: $EXIT"

  exit "$EXIT"

fi

$(echo "$JOB" | grep -q "$CITY")

if [[ $? == 0 ]]; then

# Notify the job posting was found.

curl https://api.pushback.io/v1/send -u at_token: -d 'id=Channel_827' -d "title=$CITY found" -d "body=Found a posting with $CITY!"

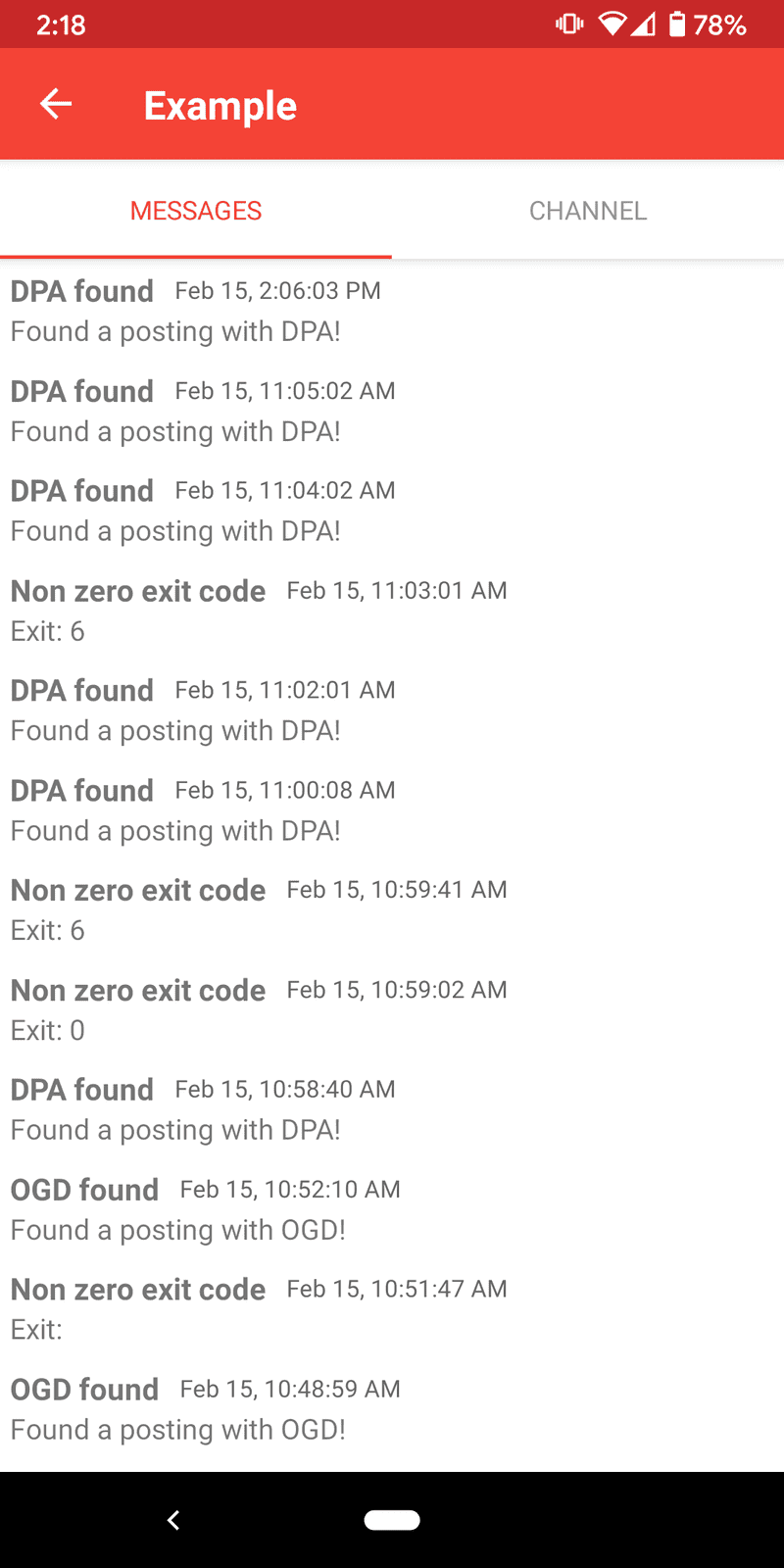

fiI sent a few test messages by changing which city the script was looking for. The messages showed up as push notifications and also appeared in the Pushback app's history. The history was also available on the web version of Pushback, which was also pretty neat.
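Editing the script for each test works, but the keyword could also come from the command line. A minimal sketch, assuming a hypothetical helper named `resolve_city` that is not part of the original script:

```shell
#!/bin/bash

# Hypothetical helper: use the first argument as the keyword,
# or fall back to DPA when no argument is given.
resolve_city() {
  printf '%s\n' "${1:-DPA}"
}

# Takes the place of the hard-coded CITY=DPA line near the top of the script.
CITY=$(resolve_city "${1:-}")
```

With this in place, running the script with any other string as its first argument would search for that string instead, while the cron entry keeps using the default.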

Setting up a cronjob

I made a cronjob to run simple-scrap.sh every minute. In a Linux terminal you can type crontab -e and it'll open an editor. Then enter the following:

# Run every minute

* * * * * /usr/local/bin/simple-scrap.sh

With a little bit of bash and using Pushback, I was able to get a simple web scraper up and running pretty quickly. My brother-in-law thought it was awesome and was really grateful.
